How to estimate the size and health of high-frequency code iterations using the delta analysis feature

The “Application Trends” feature (also known as delta analysis) makes Highlight considerably more valuable in an Agile context. In a nutshell, Highlight now computes software health scores and metrics for scanned source files according to their status: whether they were added or modified during the last iteration. This post explains how to work with this feature.

Added/Modified files vs. the cold part of the software

The mechanism behind the feature is pretty simple. For each file Highlight scans, it calculates a signature (technically speaking, a CRC, or Cyclic Redundancy Check). When a new scan occurs for the same file (same name and path), Highlight calculates a new signature. If both signatures are identical, the file hasn’t changed between the first and the second scans. If the signatures differ, the file has been modified between the scans. If a file wasn’t there in the first scan, it has been added between the two scans.
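The comparison described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Highlight’s actual implementation: the `signature` and `classify` helpers and the sample paths are hypothetical, and the sketch assumes a standard CRC-32 (via `zlib.crc32`) keyed by file path.

```python
import zlib
from typing import Dict


def signature(content: bytes) -> int:
    """Return a CRC-32 checksum of the file content (assumed signature type)."""
    return zlib.crc32(content)


def classify(previous: Dict[str, int], current: Dict[str, int]) -> Dict[str, str]:
    """Label each file of the current scan as added, modified or unchanged.

    Both arguments map a file path to the signature computed during a scan.
    """
    statuses = {}
    for path, new_signature in current.items():
        if path not in previous:
            statuses[path] = "added"        # not present in the first scan
        elif previous[path] != new_signature:
            statuses[path] = "modified"     # same path, different signature
        else:
            statuses[path] = "unchanged"    # same path, same signature
    return statuses


# Hypothetical contents of two consecutive scans.
first_scan = {
    "src/a.java": signature(b"class A {}"),
    "src/b.java": signature(b"class B {}"),
}
second_scan = {
    "src/a.java": signature(b"class A {}"),
    "src/b.java": signature(b"class B { int x; }"),
    "src/c.java": signature(b"class C {}"),
}

statuses = classify(first_scan, second_scan)
for path, status in sorted(statuses.items()):
    print(path, status)
```

Because the path is the lookup key, a file that keeps its content but changes location shows up as added rather than unchanged.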

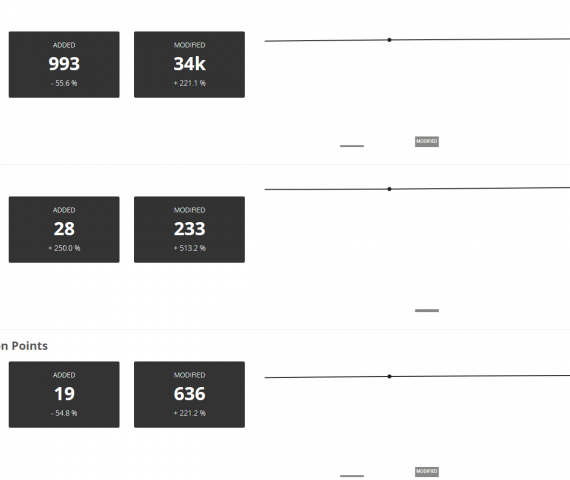

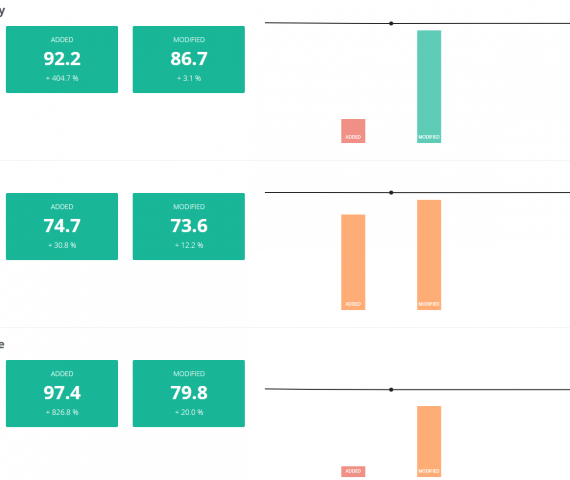

Using the specific scope of added and modified files between two sprints/iterations/scans, Highlight computes the different KPI scores for each file status category: Software Resiliency, Agility, Elegance, Lines of Code and Back-Fired Function Points (BFPs).

How to use Delta Analysis in Agile-driven code bases

You can now see that the 28 new files introduced in the codebase during the last sprint account for 993 lines of code (equivalent to a total of 19 BFPs) and that they score very high in Resiliency (92.2 out of 100) and Agility (74.7). You can also see that the development team took advantage of working on existing files to reduce code complexity: Software Elegance on modified files is 79.8, a 20% improvement over the last scan. Visually, you can see that the work done during this sprint increased the health scores, while the total BFPs have grown by only 1.2%.

This delta analysis is especially appreciated by contributors to large legacy apps who want to measure the impact of their efforts on software health. Since fixing 100 issues within a 1-MLOC application is barely visible in the overall score variation, having KPIs available only on modified and added files lets development and maintenance teams concentrate on the “living” (i.e., changed) part of their code base.

Quick tip: when scanning your code with the Local Agent or the command line, ensure the folder structure remains the same across versions, as it is used to identify scanned files. C:\src\version1.0\myfile.java will be treated as a different file from D:\src\version1.5\myfile.java, so myfile.java will be considered a new file in the second scan.
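To see why the folder structure matters, here is a minimal Python sketch. It assumes, as the tip suggests, that a file is looked up by its full path; how Highlight derives that key internally is not described in this post, and the `is_known_file` helper is purely hypothetical.

```python
from pathlib import PureWindowsPath
from typing import Iterable


def is_known_file(previous_paths: Iterable[str], new_path: str) -> bool:
    """Return True if new_path was already present in the previous scan.

    Assumption: the full path is the lookup key, so any change of drive
    or folder makes the file look new.
    """
    known = {PureWindowsPath(p) for p in previous_paths}
    return PureWindowsPath(new_path) in known


first_scan = [r"C:\src\version1.0\myfile.java"]

# Same file name, different drive and version folder: treated as a new file.
moved = is_known_file(first_scan, r"D:\src\version1.5\myfile.java")

# Same location as before: recognized, so its signature can be compared.
stable = is_known_file(first_scan, r"C:\src\version1.0\myfile.java")

print(moved, stable)
```

Under that assumption, checking out each version into the same folder before scanning keeps the paths stable between scans.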

That’s all folks! I’m confident you’ll be happy with this feature. Don’t hesitate to share your feedback and product experience with our product team!